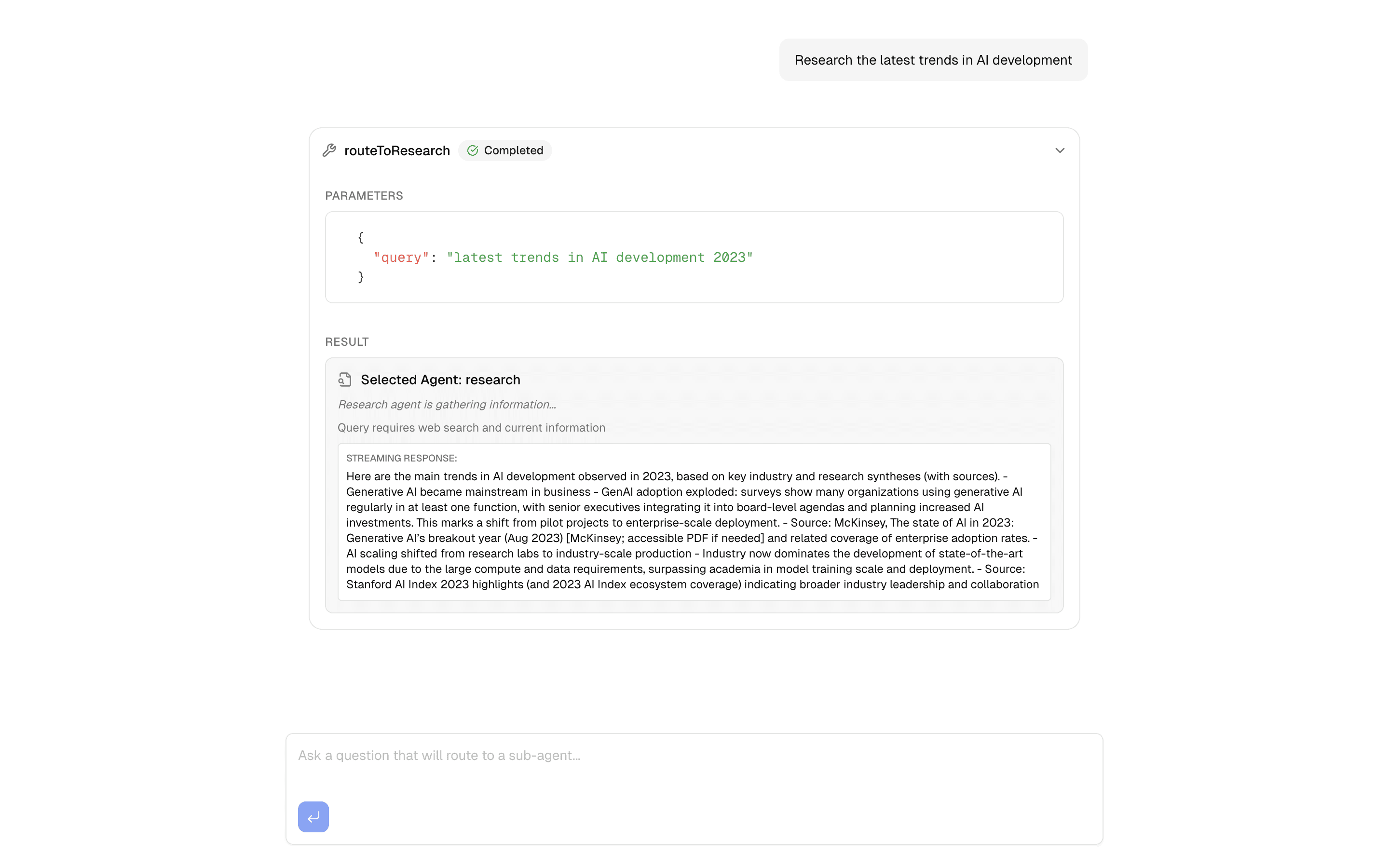

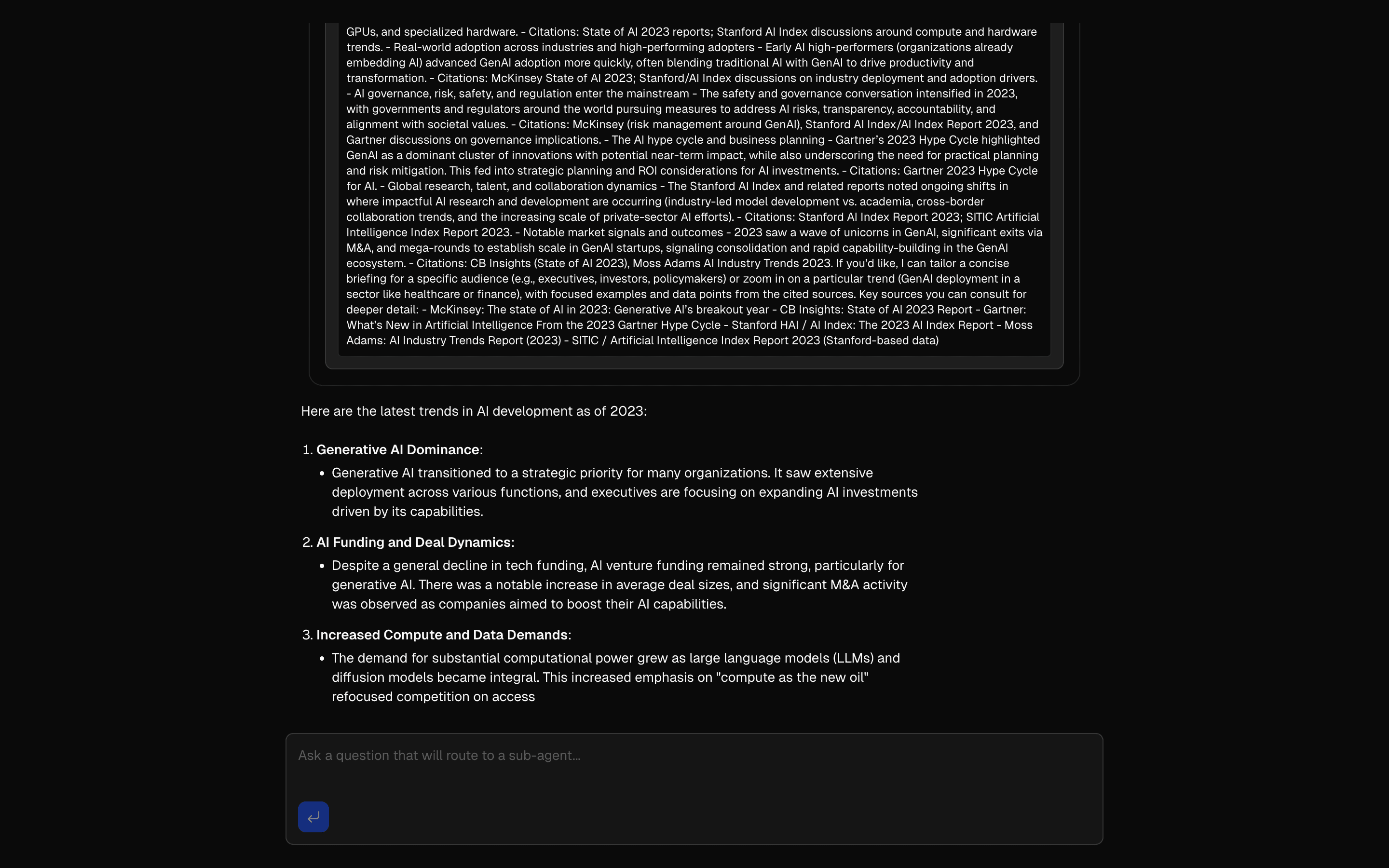

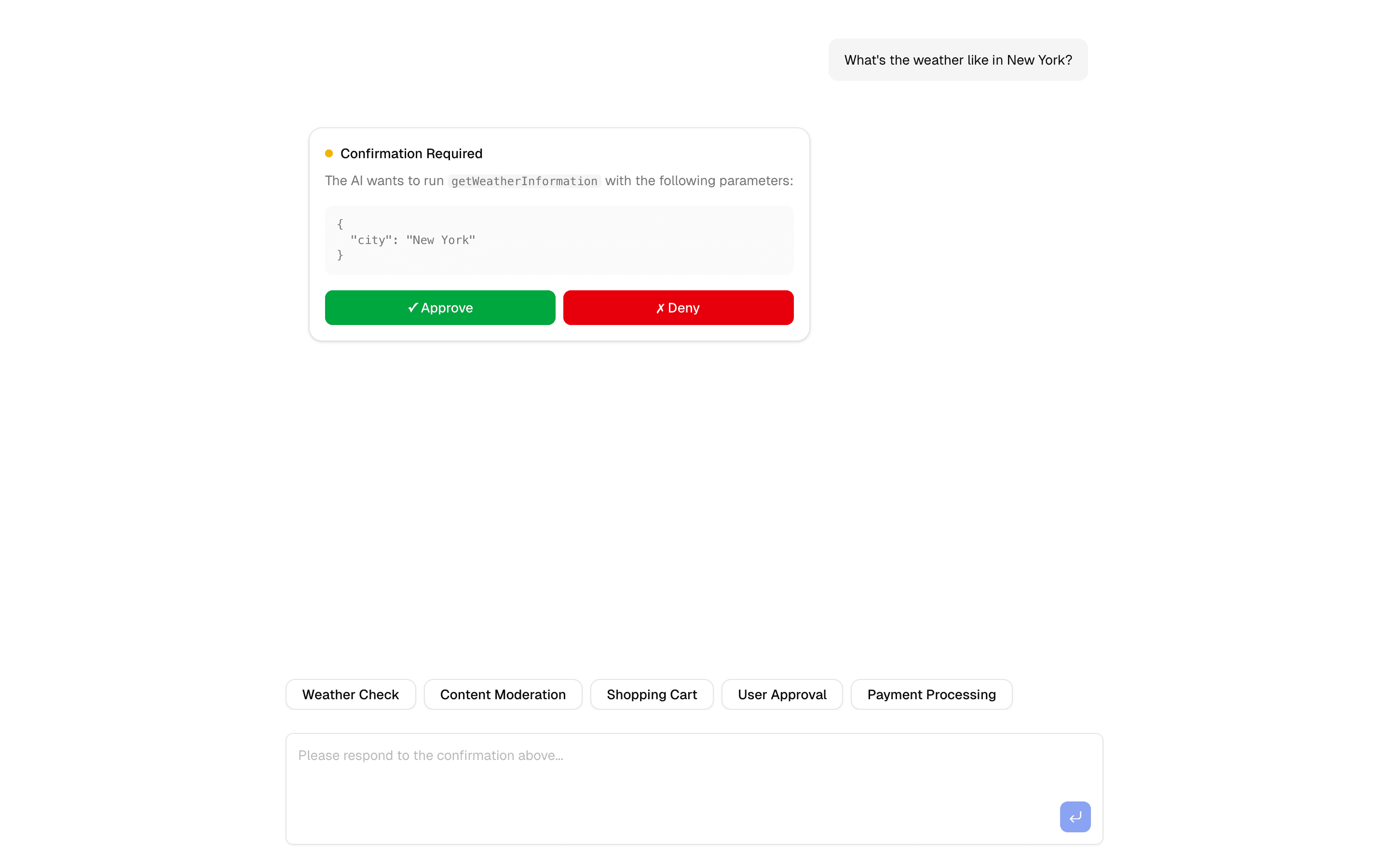

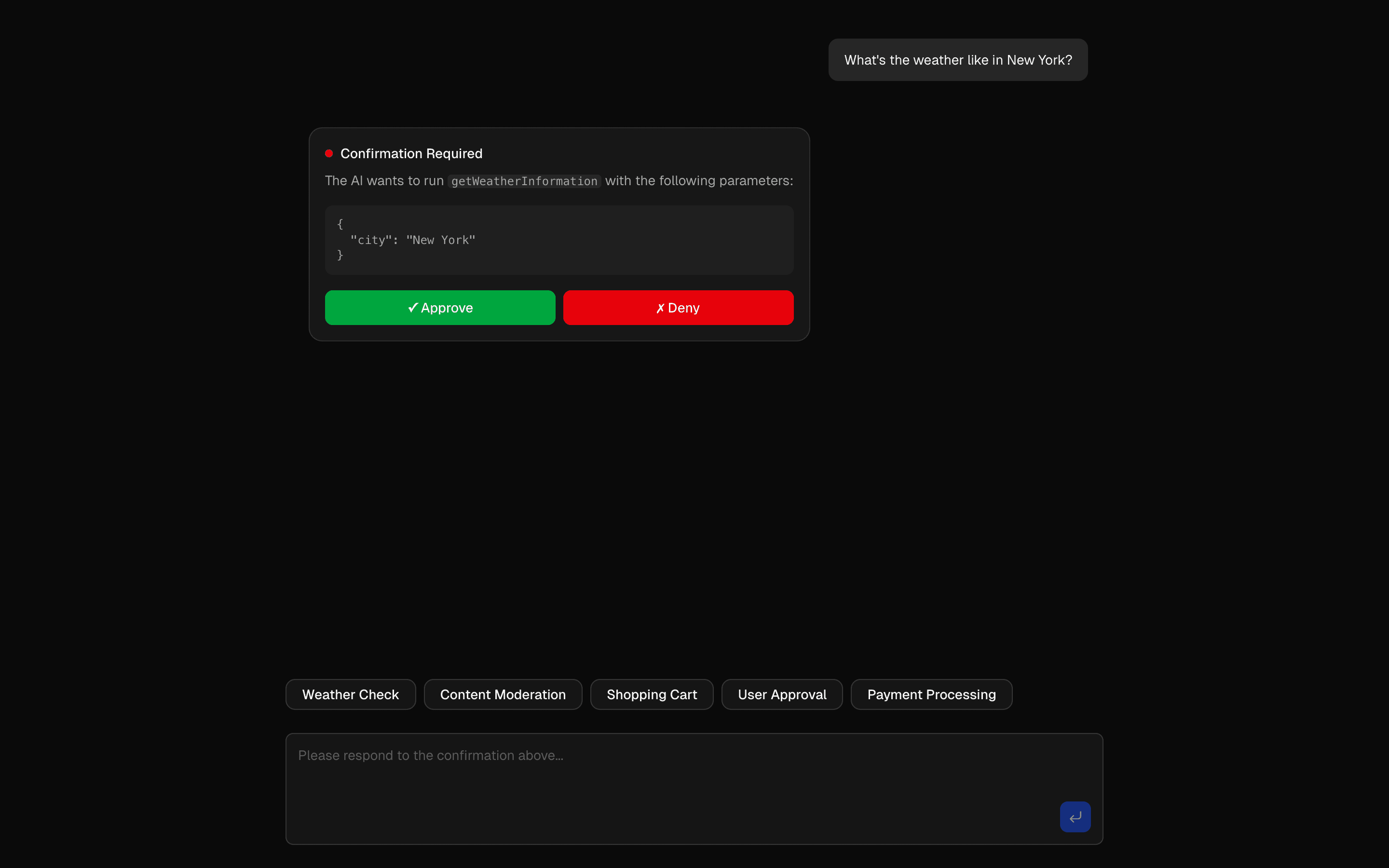

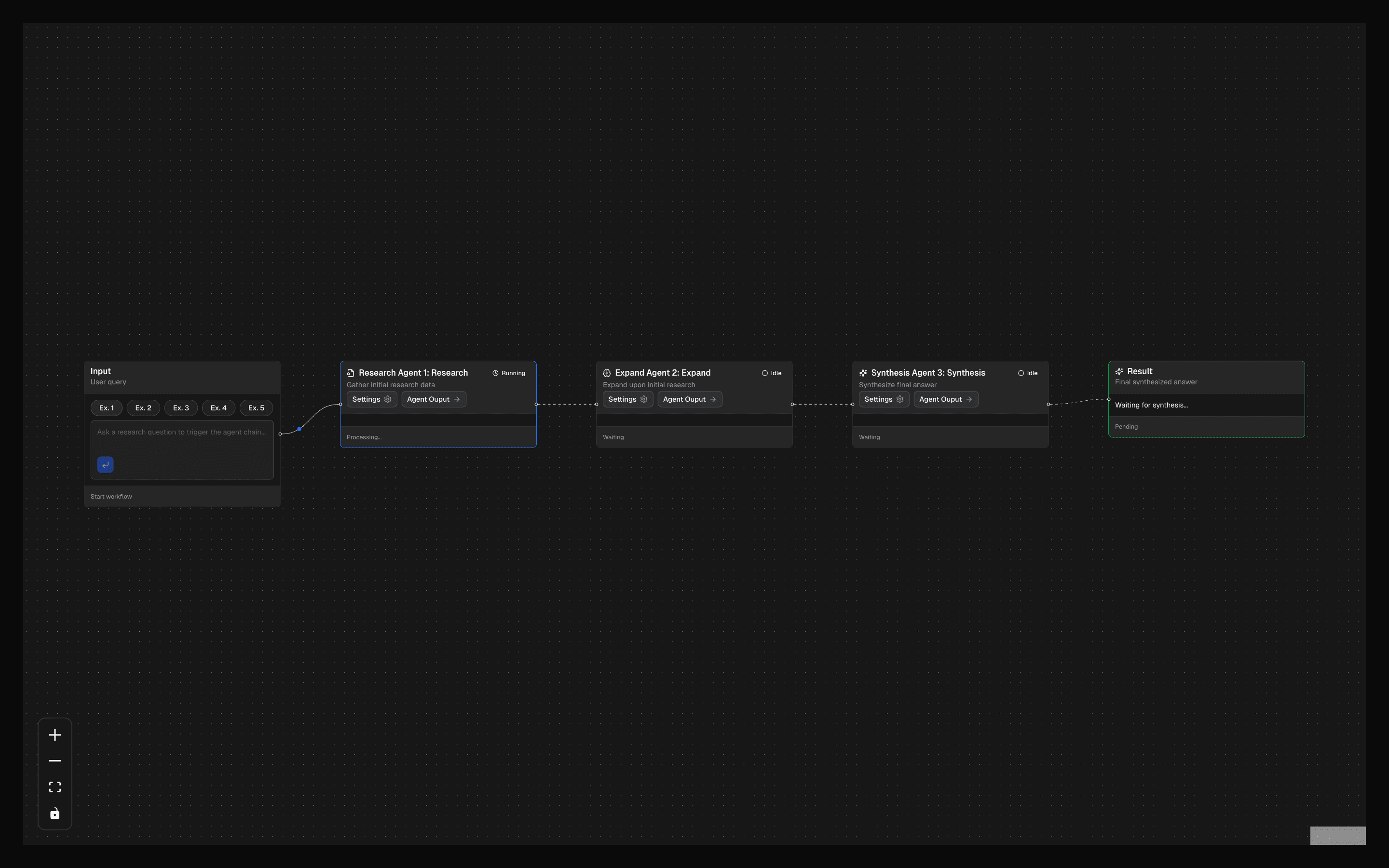

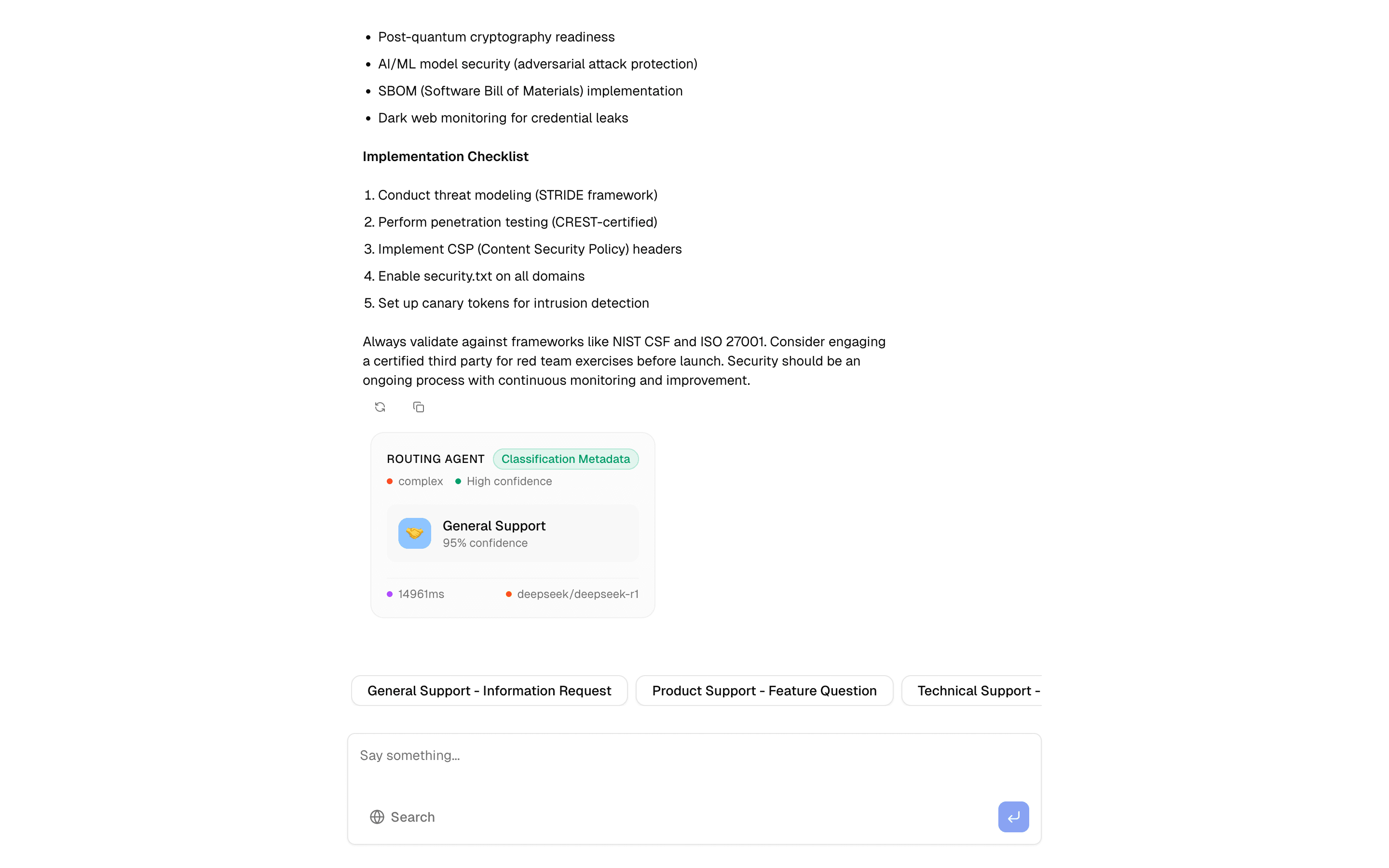

The routing pattern is the front door of a multi-agent system. Instead of sending every user message to a single monolithic prompt, it classifies the input first and dispatches it to a specialized sub-agent. Each sub-agent has its own system prompt, model, and toolset.

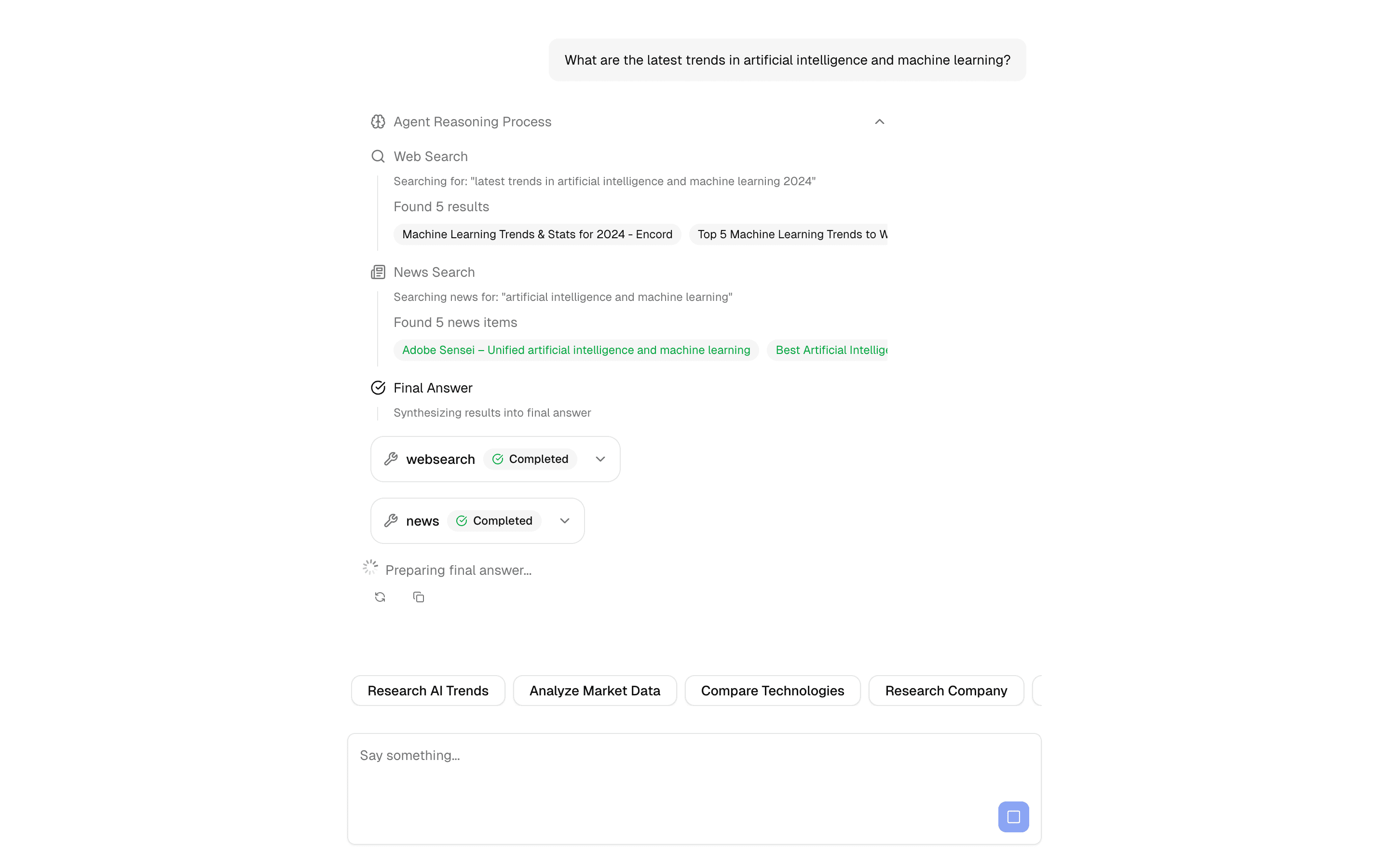

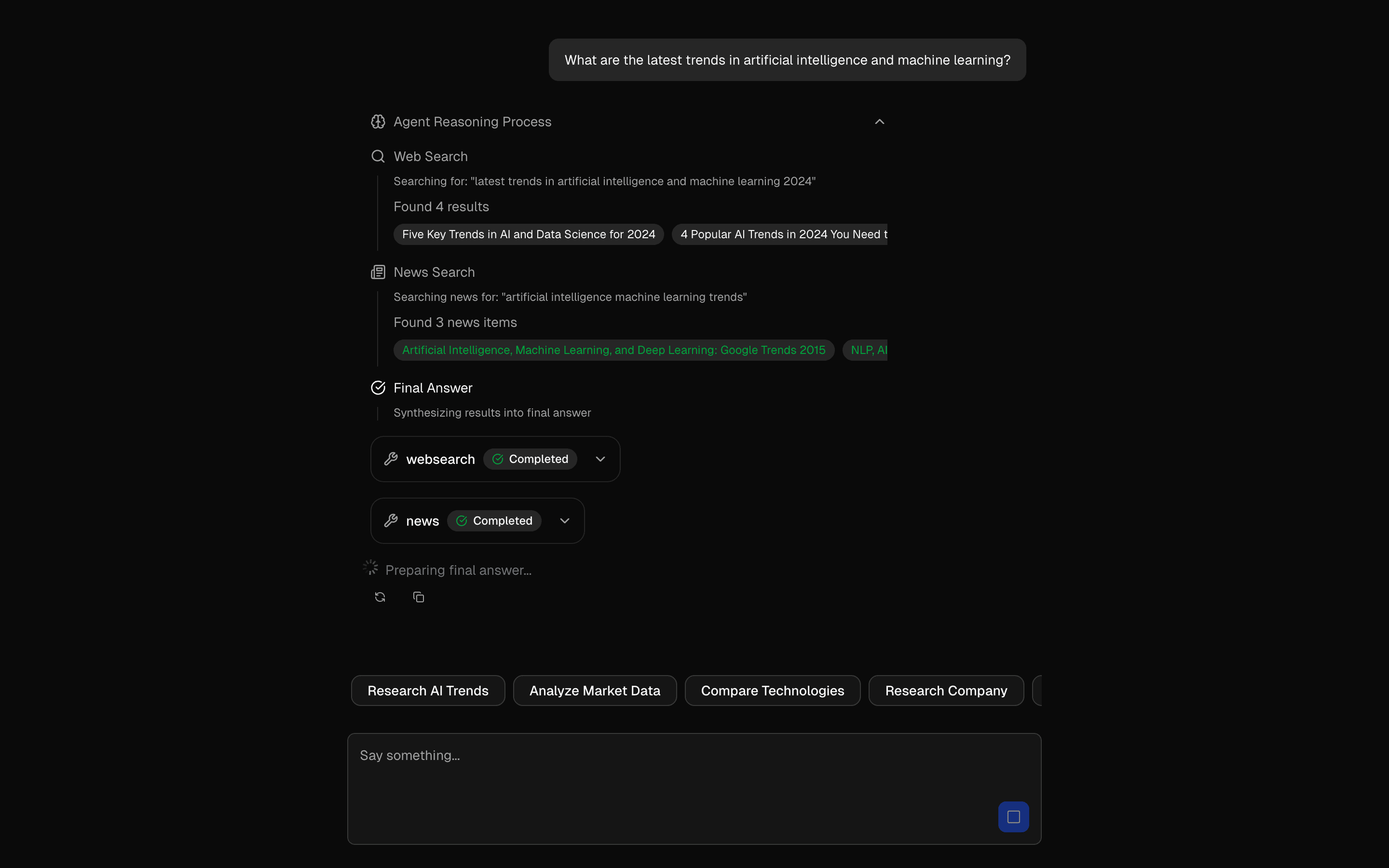

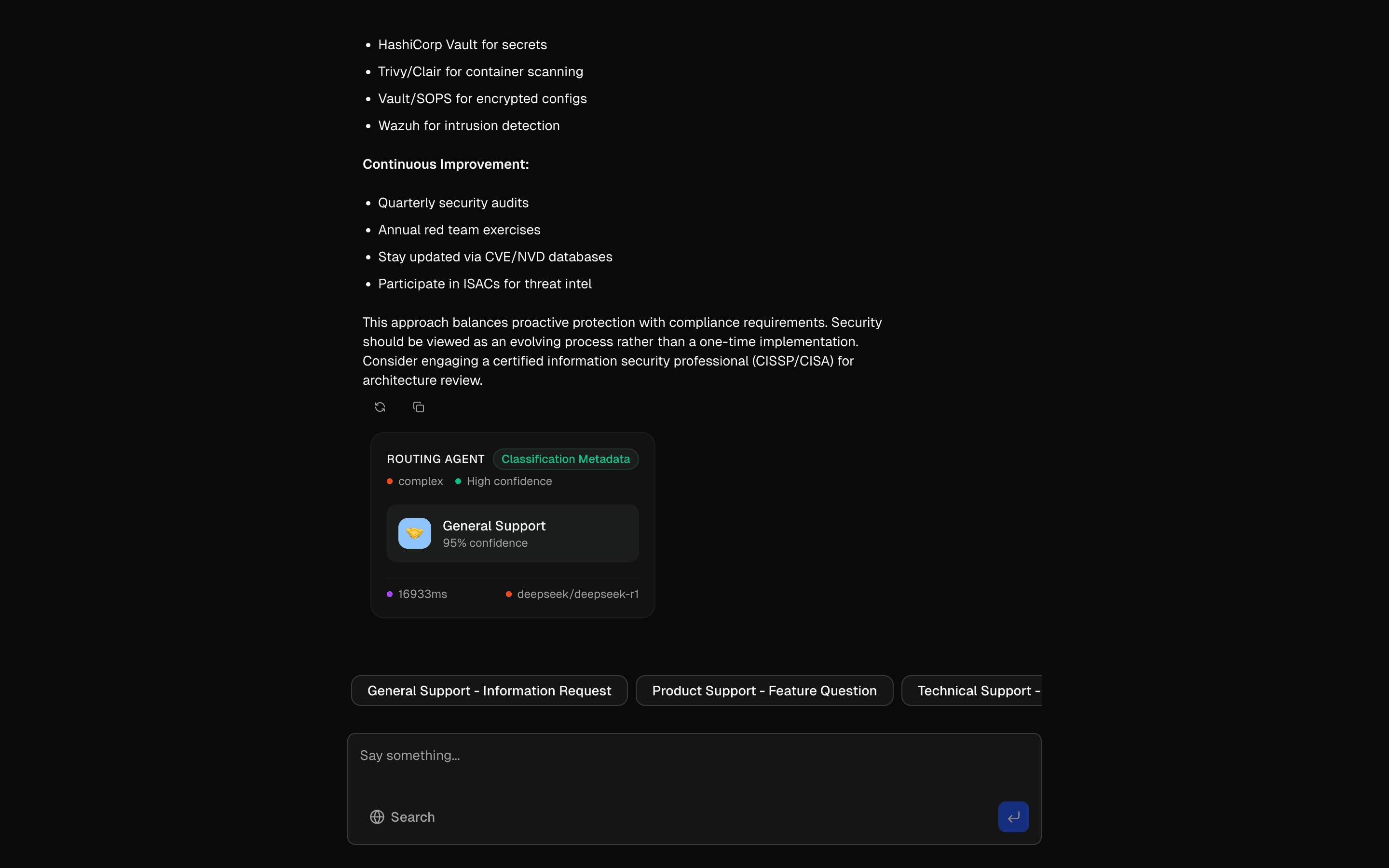

At the core is a classification step powered by `generateObject`. A Zod schema defines the possible intent categories (such as "technical", "billing", or "sales"), and a fast, inexpensive model makes the routing decision. The downstream agent then produces the actual response, using a more capable model where the intent calls for it.
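The classify-then-dispatch flow can be sketched without any SDK dependency. Here the classifier is injected as a plain function so the block runs on its own; in a real application it would presumably wrap the `generateObject` call with a Zod enum schema. The `AgentConfig` shape, the `ROUTES` table, the model labels, and the choice to fall back to `technical` on an unrecognized label are all illustrative assumptions.

```typescript
// Intent categories named in the text; in practice these would live in a Zod enum.
const INTENTS = ["technical", "billing", "sales"] as const;
type Intent = (typeof INTENTS)[number];

// Hypothetical shape of a specialized sub-agent.
interface AgentConfig {
  systemPrompt: string;
  model: string; // placeholder label, not a real model ID
}

// Hypothetical route table: one sub-agent configuration per intent.
const ROUTES: Record<Intent, AgentConfig> = {
  technical: { systemPrompt: "You are a support engineer.", model: "capable-model" },
  billing: { systemPrompt: "You handle invoices and refunds.", model: "fast-model" },
  sales: { systemPrompt: "You answer pricing and plan questions.", model: "fast-model" },
};

// Runtime guard standing in for schema validation of the classifier output.
function isIntent(value: unknown): value is Intent {
  return typeof value === "string" && INTENTS.some((intent) => intent === value);
}

// The classifier is injected so the sketch needs no model provider.
type Classifier = (message: string) => Promise<unknown>;

// Classify first, then pick the sub-agent. Unknown labels go to `fallback`
// (an assumption of this sketch, not something the pattern prescribes).
async function route(
  message: string,
  classify: Classifier,
  fallback: Intent = "technical",
): Promise<{ intent: Intent; agent: AgentConfig }> {
  const raw = await classify(message);
  const intent = isIntent(raw) ? raw : fallback;
  return { intent, agent: ROUTES[intent] };
}
```

Keeping the route table as plain data means adding a new intent is a matter of extending `INTENTS` and `ROUTES`; the compiler then flags any intent that lacks a configuration.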

The key architectural insight is the separation of classification from generation. The classifier runs on a small model such as GPT-4o-mini to keep routing latency low (the target here is under 200 ms), while the responding agent can use a heavier model for quality. This keeps costs manageable at scale, because you only pay for expensive inference on the messages that need it.
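The cost argument can be made concrete with a small calculation. The sketch below computes the expected cost per message when only a fraction of traffic reaches the heavier model. Every number is a made-up cost unit chosen for easy arithmetic, not a real provider price, and `RoutingCosts` and `blendedCost` are hypothetical names.

```typescript
// All values are hypothetical cost units per message, not real provider prices.
interface RoutingCosts {
  classifier: number; // one classification call
  light: number; // a response from the cheaper model
  heavy: number; // a response from the more capable model
}

// Expected cost per message when a fraction `heavyShare` of traffic
// is routed to the heavy model and the rest to the light one.
function blendedCost(costs: RoutingCosts, heavyShare: number): number {
  if (heavyShare < 0 || heavyShare > 1) {
    throw new RangeError("heavyShare must be between 0 and 1");
  }
  return costs.classifier + heavyShare * costs.heavy + (1 - heavyShare) * costs.light;
}

const example: RoutingCosts = { classifier: 1, light: 2, heavy: 20 };

// 1 + 0.25 * 20 + 0.75 * 2 = 7.5 units per message with routing.
const routed = blendedCost(example, 0.25);

// 20 units per message if every request goes straight to the heavy model.
const alwaysHeavy = example.heavy;

console.log({ routed, alwaysHeavy });
```

The same function also shows where routing stops paying off: if nearly every message needs the heavy model, the classifier call is pure overhead (`blendedCost(example, 1)` is 21 units against 20 without routing).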

This pattern also includes load balancing across providers and graceful fallback handling. If the primary model is unavailable, the router can redirect to an alternative without the user noticing. Use this when your application serves multiple distinct user intents that benefit from specialized prompts or different model configurations.
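Fallback handling can be sketched as an ordered list of provider calls that are tried in turn. This is a minimal illustration under stated assumptions: `ModelCall` and `withFallback` are hypothetical names, each provider is modelled as a plain async function, and load balancing, retries, and timeouts are left out.

```typescript
// A provider call is modelled as an async function from prompt to text.
type ModelCall = (prompt: string) => Promise<string>;

// Try each provider in order and return the first successful response.
// If every provider fails, reject with an error that reports how many were tried.
async function withFallback(prompt: string, providers: ModelCall[]): Promise<string> {
  const failures: unknown[] = [];
  for (const call of providers) {
    try {
      return await call(prompt);
    } catch (err) {
      failures.push(err); // remember the failure and move on to the next provider
    }
  }
  throw new Error(`All ${failures.length} provider(s) failed`);
}

// Usage with stub providers: the primary is down, the secondary answers.
const primary: ModelCall = async () => {
  throw new Error("primary unavailable");
};
const secondary: ModelCall = async (prompt) => `secondary handled: ${prompt}`;

withFallback("reset my password", [primary, secondary]).then((text) => console.log(text));
```

Because the caller only sees the resolved string, the switch from the primary to the secondary provider stays invisible to the user, which matches the behaviour described above.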